mediocregopher's lil web corner

- The best CMS that only I can use.

There's a joke that devs will spend far more time working on the underpinnings of their blog than they do actually writing blog posts. This has certainly been true for me this week. If I've done a good job it shouldn't be a noticeable change from the outside, but what you're looking at now is essentially a completely different blog than it was a day ago.

This blog started, as many do, as a Jekyll blog hosted on GitHub. To write posts I would create a markdown file in the blog's GitHub repo, and once it was committed GitHub would automatically regenerate the blog and publish it to a predefined sub-domain.

At some point in the past couple of years I migrated this blog to my home server. GitHub is still hosting the git repository, but it doesn't do the publishing. Instead, I set up a simple build system based on Nix, which automatically generates the blog's HTML files and publishes them. The whole process is described in more detail in a previous post. While the move away from GitHub has been great, I've begun to feel more and more constrained by Jekyll.

My primary complaint with Jekyll is that there's a lot of friction to creating and editing posts. At a minimum, I need to scp the post to my home server or write it there in the first place via ssh. From there I need to run the build script and then run the mailing list publish script. If there are any assets to accompany the post, like images, that means even more scp calls and different build scripts. This friction makes writing new posts something I have to set aside time to do, even though I frequently have smaller one-off things I'd otherwise publish. It also means posts rarely come with the accompanying images that would otherwise spruce things up.

So I decided to get rid of Jekyll, and in this post I'm going to describe the shiny new framework that I built to replace it.

The most fundamental change has been to use SQLite rather than git for managing posts and assets. Posts and assets are each stored in their own table, with posts kept in their original markdown form. This arrangement means I lose the git history of post edits, but I really don't make enough edits for that to bother me. In exchange, I'm able to manage posts and assets without needing to scp/ssh anything. Instead, I designed an API that allows me to do everything over HTTP, which eliminates a lot of the friction.

The second large change has been to render everything server-side. Whenever a GET request for a page is made, the server pulls all the data it needs from SQLite, converts markdown to HTML if the page is a post, and runs the data through a simple set of Go templates before finally sending the result to the browser. This system is extremely simple in terms of implementation, and very flexible as well.

One nice thing that server-side rendering gives me is the ability to have a nice post and asset management system via my browser. I've left this system open to being inspected, though a password is needed to make any actual changes. On any post in the blog, you can add ?method=edit to the end of the URL and be taken to the edit panel for that page. For example, here is the edit panel for this page. Note the Preview button! The /posts?method=manage panel is used to navigate all the posts, begin creating a new post, and even delete existing posts. New posts automatically trigger an email blast to the mailing list as well. Similarly, the /assets?method=manage panel is used for managing assets.

You may notice that, while you can look at these panels, you can't modify anything without a basic auth prompt coming up. Basic auth may seem antiquated and insecure, but it's honestly exactly what I need here. Realistically, requests which modify anything will happen only very infrequently, since they should only be coming from me with long pauses in between. With this in mind, I've backed the basic auth with a bcrypt-hashed password and a global rate limit of 1 request per 5 seconds. This takes care of the security, and the browser will hold onto a correct username/password combo for a decent amount of time so it's not so cumbersome for me. It's not pretty, but the code is.

The final piece of this is the cache. Rendering a page is pretty quick, but it's something that could become a bottleneck if a post is really blowing up (or the site is under attack). With that in mind, I've implemented a simple in-memory cache middleware which applies to all GET requests. The entire cache is then purged whenever a POST request (i.e. a request which modifies some server-side data) is made. This may seem drastic, but it's vastly simpler than handling cache invalidation at every individual http.Handler where data is changed. Again, I'm the only one who is making changes, so it's not something that will happen often.

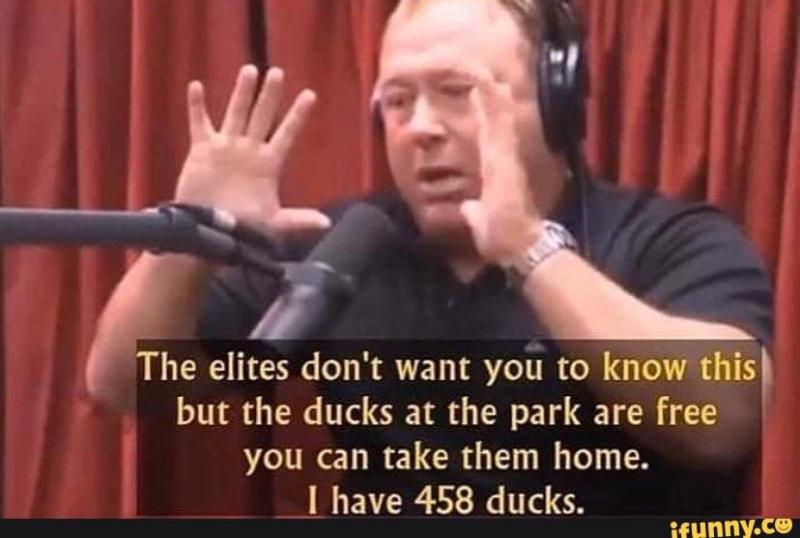

And this completes the tour! It may not be much from the outside, but it's life-changing for me already. Look, a picture!

That would not have been worth the effort before this.

Obviously, this is not the end of the road; there's a lot more I want to do. Hopefully, you stick around to see the rest! Until then, look forward to more frequent and lighter posts from me.

Published 2022-05-22

This post is part of a series.

Previously: DAV is All You Need

Next: Another Small Step For Maddy

This site can also be accessed via the gemini protocol: gemini://mediocregopher.com/